The main way we interact with computers hasn't changed much. I still primarily use a keyboard and a mouse to tell my computer what to do. These interfaces haven't evolved over the last few decades other than becoming more ergonomic and comfortable to use. Newer forms like touch screens or trackpads are evolutions of them, but the concept hasn't changed.

Voice assistants promised an alternative but didn't reach the expected adoption. Microsoft is abandoning Cortana, and there are rumors about Alexa's high costs and low returns for Amazon. The actions you can perform also seem pretty limited, focused on navigation and consumer applications.

The speed at which we create on the Internet is limited by the interfaces we use to communicate with computers. Right now there is a bandwidth problem: everything that exists is programmed, designed, and typed manually by humans. Well, that's about to change.

The next era of computing will be defined by natural language interfaces that allow us to tell our computers what we want directly, rather than doing it by hand.

The new AI technologies available to create Large Language Models, and the Transformer architecture that I described a few weeks ago, are opening up a new set of capabilities that enable the clearest framing of general intelligence: a system that can do anything a human can do in front of a computer. Picture a set of foundation models that can create and perform actions, trained to use every software tool, API, and web app that exists. Our job will be talking to AI models and they will be Making Anything With AI; we will only have to ask for it.

Text-to-copy

By now I'm sure you've heard about GPT-3 from OpenAI. This is an Artificial Intelligence trained to write original, creative content. GPT-3 is just one of the many models out there that can generate text. You can use this AI to do things such as:

- Write Blogs, Emails, and Social Media copy

- Create SEO-optimized content

- Write for you in any language

The text generated by GPT-3 is of such high quality that it's difficult to tell whether or not it was written by a human, which brings both benefits and risks. Last week, OpenAI announced a new version of its Davinci model. This latest model, text-davinci-003, improves on its predecessors by handling more complex instructions and producing longer-form content, as well as songs or limericks that previous versions in this series struggled with.

This is transforming the copywriting industry, and several startups are making huge moves. Just to name a few: Copy.ai, Jasper (formerly Jarvis), Rytr, and Lex. Marketers are loving them. They have also received love from VCs and raised sizeable rounds. The most recent is a $125M Series A for Jasper, which has since expanded into image generation to become a one-stop shop for content creators.

Text-to-image

Text-to-image models are generative models that take a text caption and produce imagery that fits the phrase, often with strikingly human-like results.

For example, OpenAI released DALL·E, now in version 2, as an AI system that can create realistic images and art from a description in natural language.

The three main image generation AI platforms are DALL·E from OpenAI, Midjourney, and Stable Diffusion from Stability AI. The latter made the community very happy by open-sourcing the model, and it recently released version 2, which produces very high-resolution and detailed images, especially for faces.

This image generation technology is revolutionizing digital art creation. It helps brands brainstorm and iterate quickly on new product ideas, and it lets Hollywood and game studios create new worlds and characters faster than ever before.

Also, many new products are adding these capabilities to their suite. Canva just announced they are embracing generative AI tools to bring these capabilities to business users. Microsoft announced a new product called Designer, powered by DALL·E. Shutterstock, the famous gallery of images for content creators and marketing, is also adding AI-generated images to its portfolio - don't search for what you want...just create it!

Microsoft Designer announcement video

Another incredible feature to highlight is outpainting, which lets users extend an image beyond its original borders using natural language descriptions. With this technology, you can add visually stunning elements in a unified style or take stories down unprecedented paths.

Outpainting feature using DALL·E from OpenAI

This is not research technology. The APIs and models are available to everyone, and freelancers and startups are building new companies and services on them, some of them very profitable from day one. One example I would like to highlight is AvatarAI, which generates 120+ avatars in different styles based on your own picture. You can now turn yourself into anything you want, and this can challenge photo studios and professional photo editors. The creator, levelsio, is building in public on Twitter, sharing his journey, his experience working with these models, and how to fine-tune them to get the desired results.

Just like with real photography, you have to make 30-300 photos to get one really good one. The hit rate is about 10-20% for me. So I generate at least 8 pics for each AI Photograph.
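To put numbers on that quote, here is a small sketch of the arithmetic behind "generate at least 8". It assumes each generated image is an independent attempt with the same hit rate, which is a simplification of mine and not something the quote states. The function name is made up for this illustration.

```python
def chance_of_at_least_one_hit(hit_rate: float, attempts: int) -> float:
    """Probability that at least one of `attempts` generations is a keeper.

    Assumes every attempt is independent and has the same hit rate.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # P(at least one hit) = 1 - P(every attempt misses)
    return 1.0 - (1.0 - hit_rate) ** attempts


for rate in (0.10, 0.20):
    chance = chance_of_at_least_one_hit(rate, 8)
    print(f"hit rate {rate:.0%}, 8 images: {chance:.0%} chance of a keeper")
# hit rate 10%, 8 images: 57% chance of a keeper
# hit rate 20%, 8 images: 83% chance of a keeper
```

Under that assumption, 8 images per photograph gives somewhere between a coin flip and a very likely keeper, which fits the rule of thumb in the quote.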

Levelsio has reached an MRR of $100k+ with AvatarAI, a service released only a few weeks ago. He also released InteriorAI.com, which creates interior design mockups and virtual staging using AI.

Marc Köhlbrugge took the same idea but implemented the feature in a native mobile app called Lensa. It is available on the App Store and it is making $1M every day. The main difference was the way he implemented the AI, which was cheaper (no third-party API) and allowed a lower price. He was very aggressive on paid ads but, most importantly, the mobile interface drove far more adoption than a website would have.

It confirms my thesis that "normal people" don't know how to upload photos to a website.

The real opportunity in AI for most people will not be only in the AI itself but in building a good front-end around it.

Text-to-video

In the previous section, we saw how to generate images from text. The AI community could not stop there: let's make them move! Last September, the Meta AI research team announced Make-A-Video, a new AI system that lets people turn text prompts into brief, high-quality video clips.

You can also take a static picture and add motion to it, or fill in the motion between two images.

Google also demoed its own AI system, which is still in development. The company says it will be capable of producing 1280×768 videos at 24 frames per second from a written prompt.

Text-to-Avatars

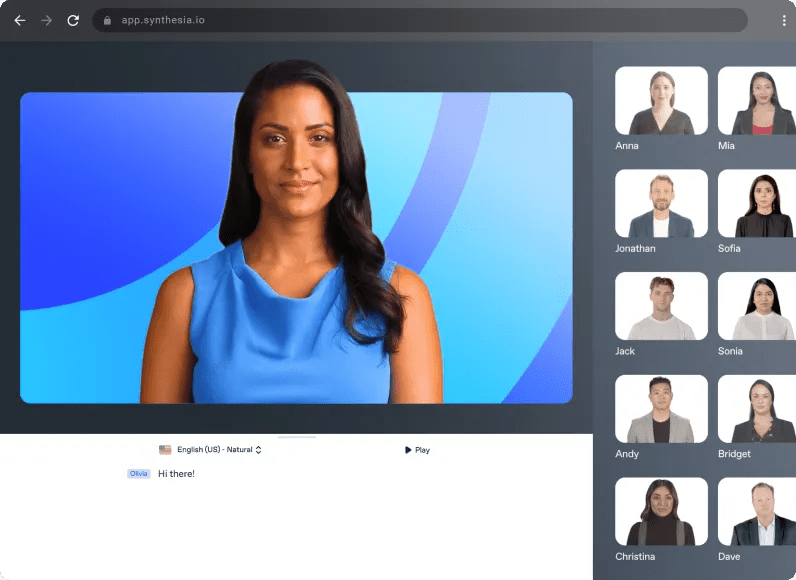

AI avatars are digital twins of real actors. You no longer need to worry about being on camera. Companies like Synthesia provide a diverse catalog of pre-built avatars and the technology to create your own. Then just type your text to add a voice in any language and generate videos quickly. No need to hire actors, third-party video agencies, expensive video equipment, or complex video editing.

Simply type in your text. The text-to-video maker will automatically create a voiceover for your videos with your avatar of choice. The creation of AI Avatars is also getting easier, with tools to scan your face using your phone or with a simple selfie.

Text-to-3D-model

You can create images, add motion to them and create videos but what about turning them into 3D? Well, that's also happening with text-to-3D models. This is the beginning of a new era to create objects, characters, and worlds for video games, movies, and applications in the Metaverse.

These models are a bit different. It is not possible to train a large model directly on 3D data because a large-scale dataset of labeled 3D assets paired with text doesn't exist (yet!). The approach here is to use a pre-trained 2D text-to-image diffusion model to perform text-to-3D synthesis. This approach, introduced by DreamFusion, requires no 3D training data and no modifications to the image diffusion model, demonstrating the effectiveness of pre-trained image diffusion models as priors. The DreamFusion project has a gallery of interactive examples.
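For readers who want the mechanics, this is roughly how the DreamFusion paper writes the update that lets a frozen 2D model guide a 3D one (the paper calls it score distillation sampling). The notation is the paper's as I understand it, not something covered earlier in this post: θ are the parameters of the 3D representation, x = g(θ) is an image rendered from it, z_t is that image with noise ε added at timestep t, y is the text prompt, ε̂_φ is the frozen diffusion model's noise prediction, and w(t) is a weighting term.

```latex
\nabla_\theta \mathcal{L}_{\mathrm{SDS}}\bigl(\phi,\; x = g(\theta)\bigr)
  \triangleq
  \mathbb{E}_{t,\epsilon}\!\left[
    w(t)\,\bigl(\hat{\epsilon}_\phi(z_t;\, y,\, t) - \epsilon\bigr)\,
    \frac{\partial x}{\partial \theta}
  \right]
```

In words: render the 3D scene from a random angle, add noise, ask the image model what noise it thinks was added given the prompt, and nudge the 3D parameters so the render looks more like something the image model would expect. Only θ is updated; the diffusion model stays untouched.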

A researcher from Peking University released Stable-Dreamfusion, a PyTorch implementation of the text-to-3D model DreamFusion powered by the Stable Diffusion text-to-2D model (GitHub repo). Given a caption, it will generate 3D models with high-fidelity appearance, depth, and normals.

Given a caption, DreamFusion generates relightable 3D objects

Researchers from Tel Aviv University introduced the Motion Diffusion Model (MDM), a generative model that creates natural-looking human animations from textual inputs and existing animations (Marketpost).

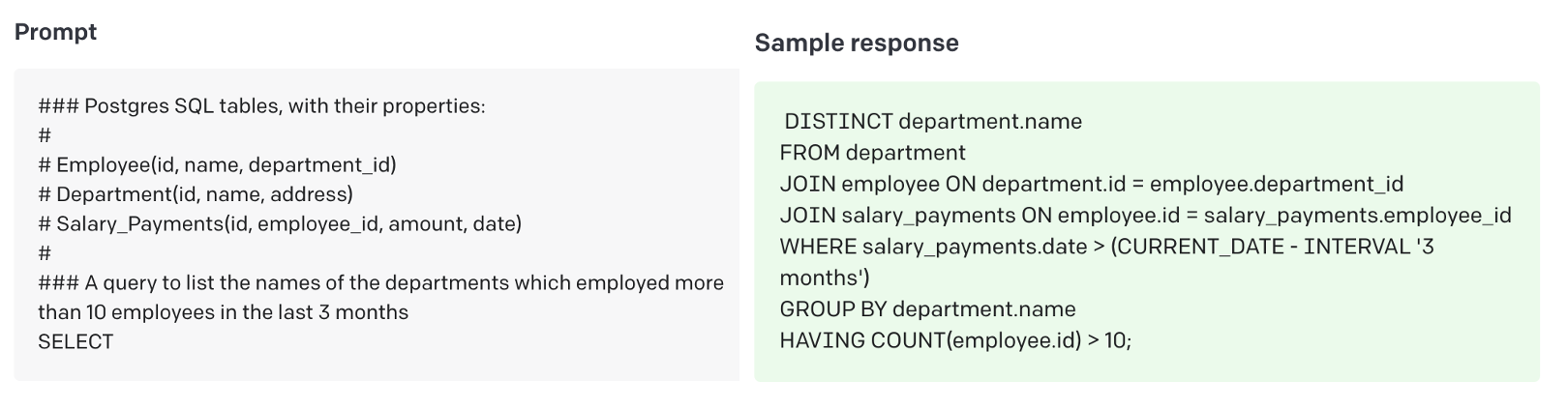

Text-to-SQL

Analysts use their SQL coding skills to explore every aspect of a business. Writing complex SQL queries is hard for non-engineers, and writing them from scratch is time-consuming.

Ask whatever questions you need in plain English, and GPT-3 will return a SQL query that answers them. Services like AI2SQL are built on top of it to provide a user-friendly interface to this technology.
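To make this more concrete, here is a minimal sketch of how such a service might turn a database schema and a plain-English question into a prompt for a text model. The function name, the prompt layout, and the example schema are all assumptions of mine for illustration; I don't know how AI2SQL actually builds its prompts. The sketch deliberately stops before calling any model.

```python
from typing import Mapping, Sequence


def build_sql_prompt(schema: Mapping[str, Sequence[str]], question: str) -> str:
    """Build a text prompt asking a language model to write a SQL query.

    `schema` maps table names to column names (hypothetical example data).
    The prompt ends with "SELECT" so the model continues with the query body.
    """
    lines = ["### SQL tables, with their columns:"]
    for table, columns in schema.items():
        lines.append(f"# {table}({', '.join(columns)})")
    lines.append("#")
    lines.append(f"### A query to answer: {question.strip()}")
    lines.append("SELECT")
    return "\n".join(lines)


# Hypothetical schema, made up for this example.
schema = {
    "customers": ["id", "name", "country"],
    "orders": ["id", "customer_id", "total", "created_at"],
}
print(build_sql_prompt(schema, "What were total sales per country last month?"))
```

The resulting text is what you would send to the model. Whatever comes back should be reviewed before running it against a real database.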

Text-to-code

Last year, OpenAI Codex revolutionized coding for developers by introducing a system that translates natural language into code. With this groundbreaking technology, programmers can now easily craft programs from simple English instructions.

OpenAI Codex has evolved from its predecessor GPT-3, taking in a wealth of public data and billions of lines of source code, including code in public GitHub repositories. GitHub released Copilot, an AI Pair Programmer based on Codex. It suggests code and entire functions in real-time, right from your favorite editor. This technology is available in the most popular IDEs such as Visual Studio, Neovim, or JetBrains.
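Here is what that workflow looks like in practice. The developer writes the function signature and a docstring in plain English, and the assistant proposes the body. To be clear, the body below was written by hand for this illustration and is not actual Copilot or Codex output. The function name is also just an example.

```python
import re
from collections import Counter


# What the developer types: a signature and a plain-English description.
def count_words(text: str) -> dict:
    """Return how many times each word appears in `text`, ignoring case and punctuation."""
    # What a suggestion might look like (hand-written here, not model output):
    words = re.findall(r"[a-z0-9']+", text.lower())
    return dict(Counter(words))


print(count_words("The cat and the hat."))
# {'the': 2, 'cat': 1, 'and': 1, 'hat': 1}
```

The developer's job shifts from typing the implementation to describing the intent and then reviewing the suggestion.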

In 2021, IBM released Project CodeNet, one of the largest annotated datasets, with approximately 14 million code samples, each of which is an intended solution to one of 4,000 coding problems. This kind of dataset streamlines the process of training AI models and drives algorithmic innovation in AI-for-code tasks like code translation, code similarity, code classification, and code search.

This technology allows developers to stop wasting time on mundane coding and syntax tasks and start spending more of it creating software. It is also challenging Q&A sites like Stack Overflow.

Text-to-music

There are also efforts to build AI that creates music from some simple inputs. Stability AI is funding one such effort: Harmonai, which uses generative AI techniques similar to those described above for image generation. They released Dance Diffusion, an algorithm and set of tools that can generate clips of music by training on hundreds of hours of existing songs. It is possible to combine various musical genres, and using "style transfer" to create covers gives surprisingly realistic results.

Some startups like Boomy allow you to pick a style, customize multiple parameters such as tempo, density, or instruments, and publish the result in one click on the most popular streaming platforms like Spotify.

Google's recently revealed AudioLM is an AI tool with amazing musical capabilities. It can continue piano pieces from short snippets of playing, but it has not yet been made available to the public or released as open source.

AI-generated music is still in its early stages, but research is already showing promising demos and new models. Expect this to evolve fast in the coming years.

Text-to-web-browsing

Earlier this year, Adept AI introduced ACT-1 (Action Transformer), their first large model. This model is trained to use digital tools such as a web browser. It is hooked up to a Chrome extension that lets it observe what's happening in the browser and take actions for you, such as clicking or scrolling.

In their announcement blog post, there are amazing examples where the user types in a text box and ACT-1 does the rest. For example, tasks that take 10+ clicks in complex tools such as Salesforce can now be done with a single sentence. The model doesn't know how to do everything, but it is highly coachable.

Check examples of ACT-1 from Adept AI

Adept makes some bold predictions such as:

- Most interactions with computers will be done using natural language, not GUIs.

- Beginners will become power users, and no training is required.

- Documentation, manuals, and FAQs will be for models, not for people.

- Breakthroughs across all fields will be accelerated with AI as our teammate.

I agree with all of them.

Text-to-Ideas

A few days ago, the world went crazy with ChatGPT, which reached 1 million users in just 5 days. ChatGPT is great for brainstorming ideas and getting advice on specific subjects. In the next example, you will see how the AI system can generate new business ideas and also elaborate a GTM plan, a hiring strategy, or an SEO strategy. The possibilities are endless, and the accuracy is pretty good.

Apart from YC and very few other programs, a lot of startup accelerators are a waste of time and sometimes a net negative. #ChatGPT is probably much better to brainstorm ideas and get some advice on specific subjects, e.g. 1/ idea discovery pic.twitter.com/bAb55a1qe4

— Mourad Mourafiq ☕️ (@mmourafiq) December 7, 2022

In this next example, the user asks ChatGPT to make a movie and then feeds the character descriptions generated by the AI into Midjourney to actually create images of them.

'#AI, make me a movie.' 😳 pic.twitter.com/pfCV5Vq5YF

— Guy Parsons (@GuyP) December 2, 2022

These tools won't replace authors, but they sure give them superpowers and a head start. Ultimately, they will help us brainstorm early in the process of creation and iteration, and then we put the human touch on top. I can ask ChatGPT for ideas for blog titles or topics, but at the end of the day, it will be my human judgment that decides what we use.

Wrapping up

It is amazing to be living in this time: we get to see all these AI tools evolve in front of us and get better every week. These tools are very impressive and powerful, but they are just that: tools.

Generative AI refers to a type of artificial intelligence that is able to generate new content, such as text, images, or audio, based on input data. This technology has a wide range of potential applications in business, including:

- Automated content creation: Generative AI can be used to automatically generate written content, such as articles, blog posts, or product descriptions, based on a set of input data. This can save businesses time and money by reducing the need for manual content creation.

- Personalization: Generative AI can be used to personalize marketing and sales messages based on a customer's individual preferences and characteristics. This can help businesses to increase engagement and conversions by tailoring their communications to each customer's needs.

- Improved customer service: Generative AI can be used to automatically generate responses to common customer inquiries, such as questions about products or services, shipping, or returns. This can help businesses to improve their customer service by providing quick and accurate responses to customer inquiries.

- Enhanced creativity: Generative AI can be used to generate new ideas, concepts, or designs based on a set of input data. This can help businesses to tap into their creative potential and develop new products, services, or experiences that are unique and innovative.

Overall, the potential applications of generative AI in business are vast, and the technology has the potential to revolutionize a wide range of industries and sectors. However, there are a few potential dangers associated with LLMs, including:

- Misinformation: LLMs are not capable of fact-checking or verifying the accuracy of the information they generate. As a result, they can easily spread misinformation or fake news if they are not used carefully.

- Bias: LLMs are trained on large amounts of text data, which can contain biases based on the sources and authors of the data. As a result, LLMs can perpetuate and amplify existing biases in their output.

- Lack of accountability: LLMs are algorithms, and as such, they do not have the ability to take responsibility for their actions. This can make it difficult to hold LLMs accountable for the things they generate, which could lead to harmful or damaging content being produced without any way to control it.

Overall, while LLMs have the potential to be incredibly useful tools, it is important to use them carefully and responsibly to avoid these potential dangers.